Series Overview

Hello folks! I know I had promised the finish of the transformer series, but it’s taking me a little more time to formulate the post than I expected. In lieu of that post, I’m going to begin another series that I’ve always wanted to dive into.

“Nature’s Optimizers” is a blog post series revolving around the different ways natural phenomena (from biology to physics) optimize in complex parameter spaces, and their cross-application to modern-day computing. It’s a common misconception for us, as humans, that our supercomputers and vast compute power make us the most powerful optimizers on the planet. After all, what could be more efficient than the delicately balanced, finely tuned machine that is human society?

Turns out, a lot of things. Nature solved the problem of finding optimal solutions long before we trained our gradient descent algorithms on it. Nature-inspired computing, which uses algorithms derived from studies on natural phenomena, is often underappreciated and I hope to shed some light on the subject through this series.

Another Pop Culture Trope

If you’ve ever watched the 1954 horror movie “Them!“, you might already be familiar with the concept of ants taking over the world. In the case of the movie, the ants = spoiler alert - were mutated into giant versions of themselves by nuclear testing carried out by the government.

Though this might seem like pure science fiction, the fact is that ants are well-poised for world domination. Since ancient times, scientists and philosophers alike have marveled at the unparalleled efficiency of ant colonies. The clear division of labor, singular focus on survival, and unending desire for conquest may well have served as a blueprint by which early civilization developed.

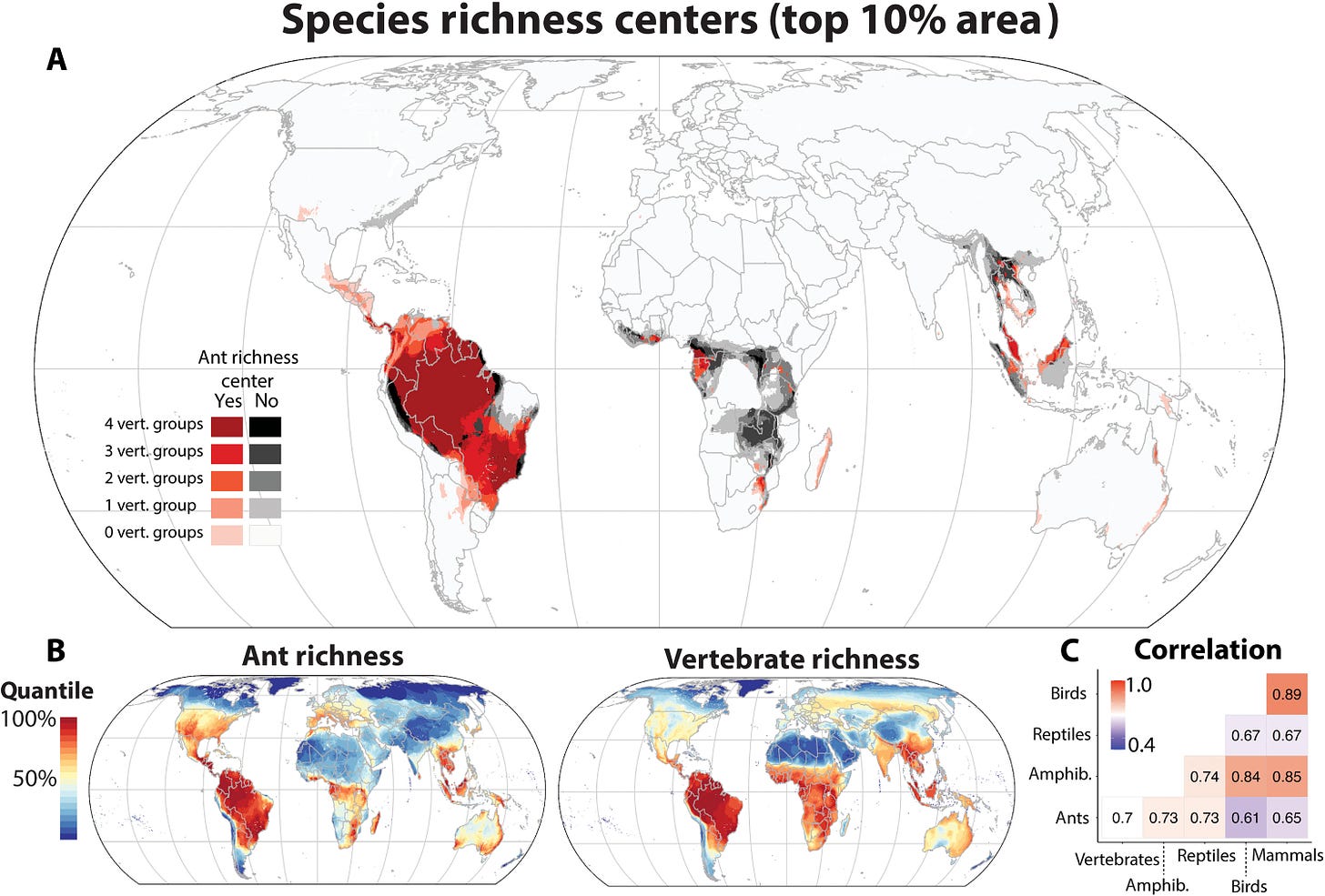

There are more than 12,000 species of ants in practically every vestige of the world, many of which are deemed far too inhospitable for humans. In ant-lover circles, it is widely accepted that ant supercolonies already control ecosystems spanning large swathes of entire continents. These colonies ravage any and all opposition. Even in resource-starved burning deserts or frigid tundras, ant colonies are extremely efficient seeking, acquiring, and allocating food.

But this post isn’t about ants. It’s about how swarms of ants coordinate in a way to maximize overall performance. This concept is known as Swarm Intelligence (SI).

Ant Colony Optimization

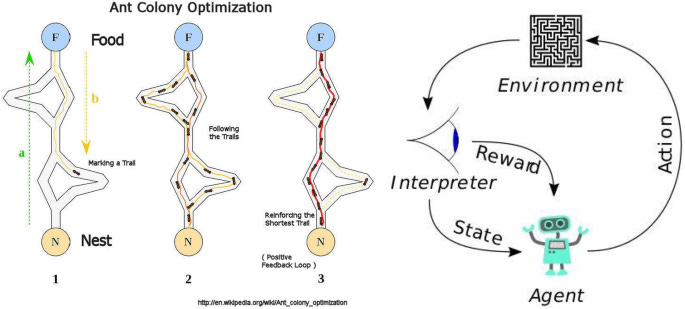

Imagine you have a colony of 1000 ants centered at a rock in the forest. Your primary food source is tasty beetle larvae, which hide underneath the other rocks in the forest. Rocks are widely dispersed throughout the forest, and some rocks might have much more food under them than others. At the start, you don’t know anything about the distribution of rocks and food. However, you do have some ants that you can send out. Ants can signal to other ants by laying down pheromones. In nature, this signaling system is incredibly complex but for our purposes assume there is only one attractant pheromone.

Initially, there are a set of stones that are directly accessible to you. Call these the proximal stones. Some are closer, and some are farther. A priori, since you don’t know anything about the food availability, it’s prudent to prefer stones that are closer. However, you also want to “explore“ the other stones as they could potentially have many grubs, or be close to non-proximal stones which have many grubs. You can send out your 1000 ants randomly to the proximal stones, with probability negatively proportional to the distance.

Once your ants reach the stones, they discover a certain number of beetle larvae. Some stones might have no larvae, others may be filled with them. If you find a good food source, you want some way of relaying that information so that other ants can go and bring some of those larvae back to the colony. This is where pheromones come in.

On the way back from their initial expeditions, your ants will lay down pheromones along the path they visited. The strength of the pheromones is proportional to the amount of food they discovered. Now you have a source of a posteriori information that allows you to send more ants to rocks that were profitable. As these ants collect more grubs, they will in turn lay stronger pheromone trails which reinforce the connection.

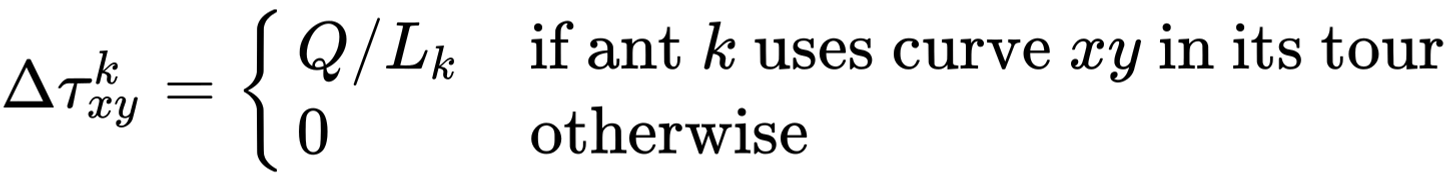

The catch is that the pheromone trails evaporate. Over time, the strength of a connection decays as the chemical evaporates. This evaporation serves an important purpose, which is to prevent overexploitation of resources. In the explore vs exploit tradeoff, if pheromones stayed in place then all the ants would follow the best stones we immediately find without checking to see if there are potentially any better ones out there. When we start to exhaust the proximal stones, we can start searching one step further out at the stones connected to the proximal stones.

You might have guessed that the stones corresponded to nodes, the paths between stones correspond to edges, and ants represent agents. What’s startling is that even though this is a purely contrived example to demonstrate the algorithm, it’s extremely similar to how actual ants behave in the wild (albeit simplified). Isn’t that cool?

A Little Formalism

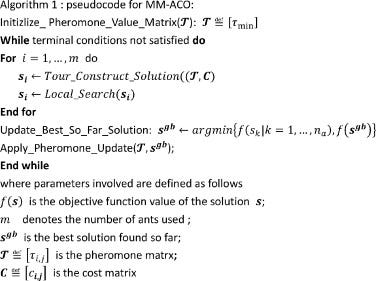

An ant colony optimization problem is one where we need to find the shortest path on a weighted graph. In other words, the path between two nodes that minimizes the total cost, which is the sum of all the edges in the path. If we want to get from node A to node B in the graph, we send out a bunch of “ants“ or agents to randomly try the various paths going between the two nodes. The ants then report back on the efficiency of the paths, and the paths are then compared. A pheromone value is then computed for each edge, based on how useful it was across all the paths investigated. We can then repeat this process, preferentially checking paths that use edges that have high pheromone strength, until we converge on a solution.

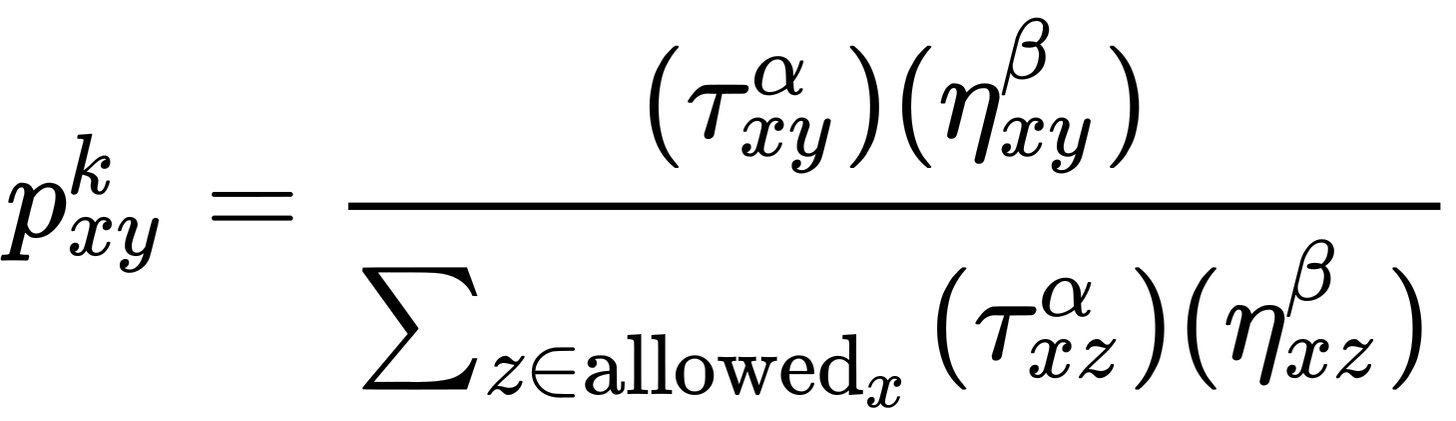

So how do ants or agents choose paths? Well at each given node, we choose our next node based on a combination of its cost, or the weight of the connecting edge, and the pheromone value associated with that edge. The formula itself is pretty simple.

The probability of a move from our node x to y is determined by our two edge values. Tau is the pheromone strength, which is scaled by a coefficient alpha. Alpha represents how much our ant cares about pheromones when it comes to its decision process. Similarly, eta is the cost of the move, scaled by its own coefficient beta. For a given move, this value is divided by the total value across all possible next steps to give a relative probability. Our agent then stochastically chooses a step and repeats, it until it gets to our target node.

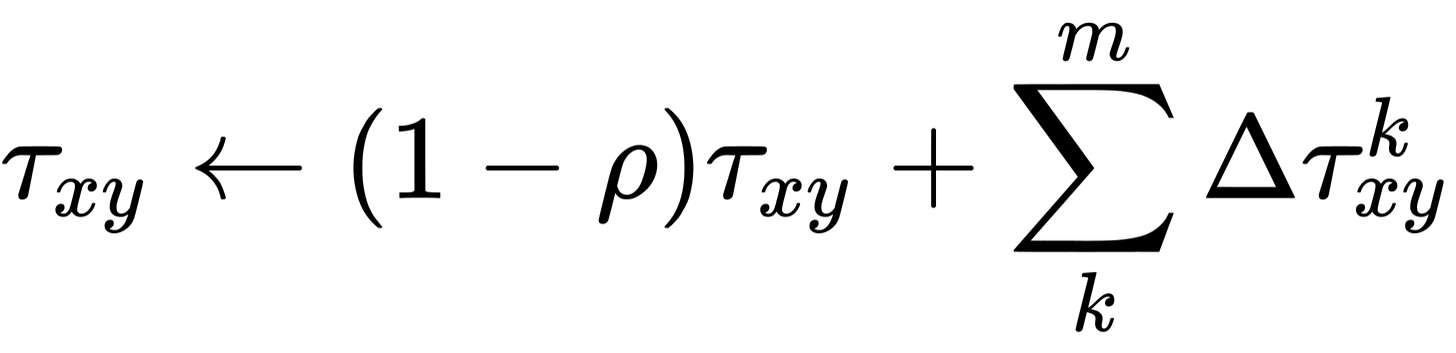

After our agents have chosen their paths based on the above algorithm, we now have to use the information we have gleaned to update pheromones.

Again, the update looks complex but actually isn’t. Rho is the coefficient for how fast the pheromone evaporates. If we set the coefficient to 0.1, then after every update 10% of the pheromone on any given edge will disappear. The other term is the pheromone deposition by ants using an edge in their path. If the ant took a bad path, i.e. one with a high cost and/or many edges, then the pheromone deposited on the edge will be low. This corresponds to the fact that if an edge contributed to a lot of bad paths, it probably isn’t a very good edge.

The converse is also true, where good paths reward their edges with more pheromones. In the formula, L is the total cost of the path and Q is a constant which prescribes some base amount of pheromone deposition regardless of how good/bad the path is. Obviously, if the ant doesn’t visit the edge in its path it won’t deposit any pheromone.

This system allows our colony to efficiently find resources.

Multi-legged Bandits

The Ant Colony Optimization (ACO) strategy is one answer to the classic explore vs exploit problem. What I find really cool about the strategy is that it does this balance on a micro and macro scale.

Each agent can balance its own explore vs exploit by simply changing the alpha and beta parameters. A high alpha value will favor paths with high pheromone values, which is conducive to an exploit strategy. By contrast, a high beta value means an agent will act purely from what knowledge it has available directly in front of it a priori, in terms of observed costs. We can initialize our agents with different alpha/beta values to encourage different explore vs exploit balances, which could improve overall performance.

And indeed, there is some evidence this is how it actually works in the wild. This is a way to conceptualize the specialization we see in ant foragers. Entomologists have long observed differences between ant scouts, recruits, and patrollers in terms of how they seek out resources and how they respond to pheromones. Rather than a complex biological specialization, perhaps the difference is really as simple as tuning a few hyperparameters under the hood.

But in fact, we can go one level further, and change rho and Q. When pheromone evaporates faster, all agents are less capable of exploration. This is because the exploitation incentive decays significantly with each iteration. On the other hand, if Q is high, more pheromone is being deposited on all the paths being explored. This incentivizes global exploration.

In the wild, it might sound impractical to change the pheromone evaporation rate or the rate at which it’s deposited. It’s true that individual ants have little control over both of these facts. However, once again there is evidence that over countless generations, ant colonies evolve to tune these hyperparameters to suit their ecological niche. Isn’t that amazing?

Smarter Colonies

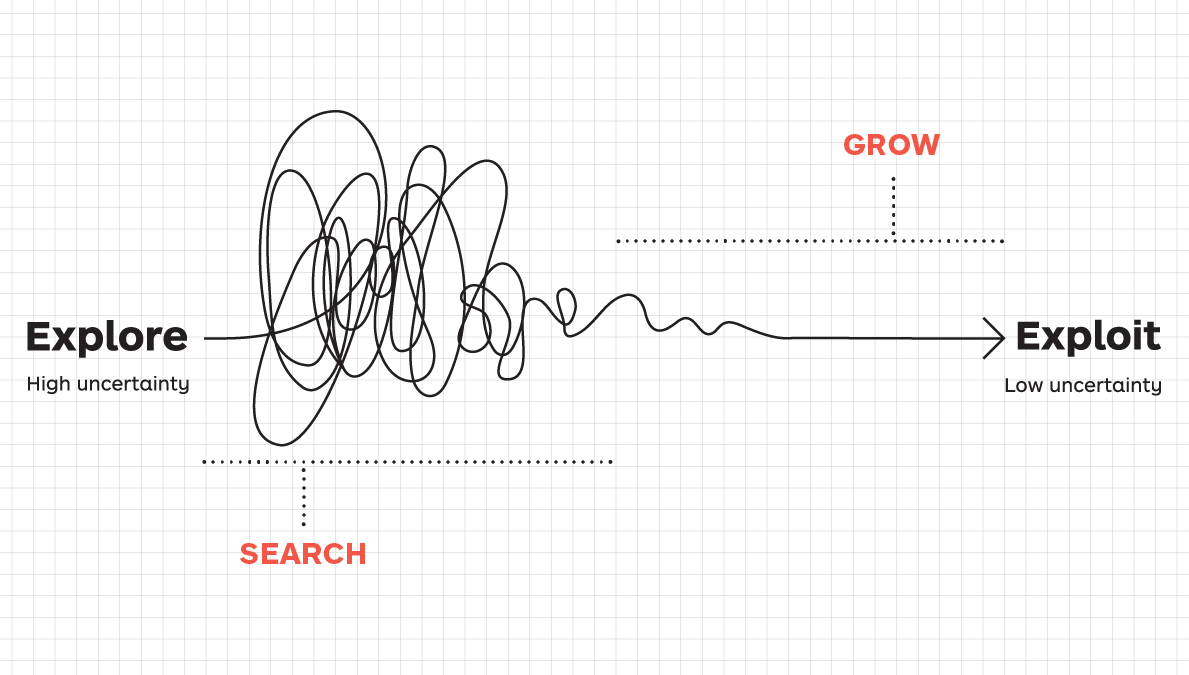

ACO was first formulated in 1992 in Marco Dorigo’s Ph.D. thesis. In the 30 years that have passed since then, there have been significant developments on the initial algorithm. One example is the MAX-MIN ACO algorithm (MMACO).

MMACO primarily differs in the way it updates pheromones. Instead of all ants updating their paths with pheromones after a search, only one ant lays down pheromones. To prevent the search from stagnating, the pheromone values can only lie in the range of the hyperparameters [MIN, MAX], from which the algorithm derives its name. In the beginning, every single edge in the graph is set to MAX pheromone value and either decays or gets reinforced by the MMACO procedure.

The more esoteric ACO algorithms perform even better on specialized tasks but are further distanced from the biological reality of how ants function in the wild. Hu et al., for example, established Continuous Orthogonal ACO (COACO).

COACO is a variation of ACO to solve continuous problems. Whereas our notion of ACO works on the graph as a discrete object, COACO operates in an n-dimensional continuous domain, such as the 2d surface above. COACO is complicated, but at its base level, it involves ants being randomly dropped into regions in the domain where they then explore their surrounding regions. They then communicate using pheromones, except notably that each individual ant can sense pheromones globally even if they haven’t visited that region themselves.

There are many more variations of ACO which are covered excellently in this literature review, so go check it out!

Ants In My Intellectual Pants!

I hope you enjoyed this post on ACOs. In practice, ACO and its variants are widely employed to solve problems ranging from internet traffic to vehicle routing to even image processing. What’s crazy is that ACO could be the gateway to artificial general intelligence (AGI). In this recent paper, an ACO evolution algorithm, which combines ACO with neural networks, is used to implement powerful Collective Intelligence (CI), which then easily solves games like Tic-Tac-Toe and Four in a Row. CI is a stepping stone toward AGI, and these promising results demonstrate that ACO remains a topic of ongoing research.

The cursory, surface-level review of the field of ACO doesn’t really even do it much justice. As such, I have attached a few interesting rabbit holes for you to follow. In my next post, I’ll cover particle swarm optimization and its biological origins. See you soon!

Links: