Using Overly High-Powered Models for Simple Problems (Part 1 of 3)

In this blog post series, we will be writing a Transformer model to solve the Repeated Prisoner's Dilemma problem.

Formulation

The year is 2050 and the world is now engaged in interplanetary conflict with Elon Musk’s Mars Empire (EMME). You and your partner are spies serving in the Space Force, which by now has become the largest branch of the military. On a covert mission to attack Musk’s autonomous vehicle guidance mainframe, you are betrayed and captured by enemy units. They lock both of you up separately in the deep dungeons underneath Mars, where they employ the most gruesome of futuristic torture tactics to get information out of you.

Death is certain.

But before they infect you with a bioweapon that will induce the most excruciating pain imaginable for a week, during which time your organs will swell and eventually burst, they offer you a chance. You can defect from the Space Force and give up every piece of confidential information you know. If you give up the information but your partner does not, they will give you an additional 5 months to live as a sign of gratitude, and your partner will be unceremoniously put to death by a virus. If the opposite occurs, your partner will reap the benefits and you will not.

If both of you decide to betray the Space Force, then each of you will get to live for only 1 month. After all, once they have all the information they need, they have no further use for either of you. On the other hand, if neither of you gives up state secrets, you will both be kept alive for 3 months so they can keep trying to extract information.
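To keep the numbers straight, here is a minimal sketch of the payoffs as a lookup table. The names `PAYOFF` and `months` are made up for illustration, and treating "put to death" as 0 extra months is my assumption, since the story only gives the 5, 3, and 1 month outcomes.

```python
# "C" = cooperate with your partner (stay loyal, keep the secrets)
# "D" = defect (give up the secrets)
C, D = "C", "D"

# PAYOFF[(your_move, partner_move)] -> (your_months, partner_months)
# The 0 for the betrayed prisoner is an assumption, not stated in the story.
PAYOFF = {
    (C, C): (3, 3),  # neither talks: both are kept alive for 3 months
    (C, D): (0, 5),  # you stay loyal, your partner talks
    (D, C): (5, 0),  # you talk, your partner stays loyal
    (D, D): (1, 1),  # both talk: 1 month each
}


def months(your_move, partner_move):
    """Months of life you earn in a single round."""
    return PAYOFF[(your_move, partner_move)][0]


print(months(D, C))  # 5
print(months(C, C))  # 3
```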

So what should you do?

Living longer is extremely good. The more time you have, the higher the chance you escape your grisly fate. However, the clock is ticking. It’s time to make your choice: will you stay loyal to the Space Force or defect to Musk’s evil syndicate?

Introduction

If it was not immediately obvious, the convoluted tale above is a recreation of the famous Prisoner’s Dilemma. The problem has been extensively studied in the field of game theory and there are some very cool results that can arise from a seemingly trivial problem. In particular, this paper by Patrick LaVictoire (which I had the opportunity to hear Mihaly Barasz give a talk on at ESPR) is damn awesome and I would highly recommend a read.

There is no shortage of external resources on the problem, as a cursory Google search will reveal. There are some interesting spins we can put on the problem, however.

Repeated Games

Played only once, the problem gives you nothing to go on: without any knowledge of the other agent, you can’t make a well-informed guess about how they will act. However, you’ve been spying alongside your partner for decades. This isn’t the first time you’ve been caught and put in a similar situation, though that doesn’t make this particular instance any less harrowing. Your partner has a history of how they’ve behaved, and you have a history of how you responded to past dilemmas. This information allows you to build a prior for how the other agent is likely to react in this situation.

This is called the repeated prisoner’s dilemma, which is much more interesting. Let’s think of some scenarios of how agents might react.

Cooperate Rock

Your partner isn’t very smart (“dumb as a rock”, as the saying goes). But what he lacks in brains, he makes up for in loyalty. No matter how dire the situation or how you have behaved in the past, he will never betray the glory of the Space Force. He will always cooperate with you against Musk’s tyranny.

Assuming you are not so irrationally inclined, your best interest is always to give up the secrets: betraying him earns you 5 months, while staying loyal alongside him earns only 3. Your partner’s noble sacrifice would give you the time you need to escape.

Defect Rock

Your partner is again lacking in mental faculties. This time, he is instead a conniving bastard who will betray you the first chance he gets. It is again in your best interest to give up secrets. If you were to stay silent, your partner’s duplicity would be rewarded with 5 months of life while you would meet your untimely end. Betrayal guarantees you a month to survive, so hope is not totally lost.
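Both rock partners lead to the same conclusion, which is easy to check mechanically. The sketch below picks the move that maximizes your months against a partner whose move is fixed. `PAYOFF` and `best_response` are illustrative names, and the 0 months for being betrayed is an assumption.

```python
# PAYOFF[(your_move, partner_move)] -> months of life you earn.
# "C" = cooperate (stay loyal), "D" = defect (give up secrets).
# The 0 for being betrayed is an assumption.
PAYOFF = {
    ("C", "C"): 3,
    ("C", "D"): 0,
    ("D", "C"): 5,
    ("D", "D"): 1,
}


def best_response(partner_move):
    """Your single-round move that earns the most months against a fixed partner move."""
    return max(("C", "D"), key=lambda move: PAYOFF[(move, partner_move)])


print(best_response("C"))  # against Cooperate Rock: D (5 months beats 3)
print(best_response("D"))  # against Defect Rock:    D (1 month beats 0)
```

Against a partner who ignores history entirely, defecting comes out ahead either way.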

Tit for Tat

Now your partner is a little bit smarter. He believes strongly in Hammurabi’s Code, which famously proclaimed “an eye for an eye”. Think back to the last time you were in a similar situation. If you remained loyal to the Space Force and cooperated, your partner will remember your commitment and reciprocate this time around. If you betrayed him last time, he will come back with a vengeance and give up secrets this time around.

In such a situation, you want to stay on good terms and always cooperate. That way your partner trusts you and will always cooperate with you, giving you 3 months to escape together. Betraying your partner, however, can exact a heavy price.
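To see how heavy that price is, here is a rough simulation of repeated rounds against a Tit for Tat partner. Several things here are my assumptions rather than part of the story: Tit for Tat cooperates in the very first round, months are simply summed across rounds, a betrayed prisoner gets 0 months, and all the function names are made up for illustration.

```python
# PAYOFF[(your_move, partner_move)] -> months of life you earn that round.
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


# Every strategy takes (own_history, other_history) and returns "C" or "D".
def always_cooperate(own_history, other_history):
    return "C"


def always_defect(own_history, other_history):
    return "D"


def tit_for_tat(own_history, other_history):
    # Assumption: cooperate in the first round, then copy the other side's last move.
    return other_history[-1] if other_history else "C"


def total_months(you, partner, rounds=10):
    """Sum of the months you earn over `rounds` repeated dilemmas."""
    your_history, partner_history = [], []
    total = 0
    for _ in range(rounds):
        your_move = you(your_history, partner_history)
        partner_move = partner(partner_history, your_history)
        total += PAYOFF[(your_move, partner_move)]
        your_history.append(your_move)
        partner_history.append(partner_move)
    return total


print(total_months(always_cooperate, tit_for_tat))  # 30
print(total_months(always_defect, tit_for_tat))     # 14
```

Over ten rounds, always cooperating earns 3 months every round, while always defecting earns 5 once and then 1 per round after your partner retaliates.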

Other Agents

There are infinitely many other viable strategies that your partner could use. For example, your partner might choose to cooperate purely at random. They could also cooperate based on a more complex pattern in your history, such as whether you cooperated in at least 6 of the past 8 instances. It’s difficult to know which strategy your partner will use.

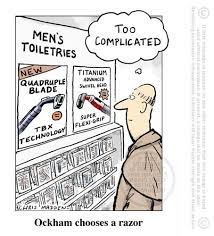
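The two example agents above can be sketched in a few lines. The names are hypothetical, the 50/50 coin flip is one reading of "purely at random", and defecting when there are fewer than 6 cooperations on record (including when the history is still short) is an assumption about a case the description leaves open.

```python
import random


# Every agent takes (own_history, other_history) and returns "C" or "D".
def random_cooperator(own_history, other_history, rng=random):
    """Cooperate or defect on a fair coin flip, ignoring all history."""
    return rng.choice(["C", "D"])


def six_of_eight(own_history, other_history):
    """Cooperate only if the other side cooperated in at least 6 of the last 8 rounds."""
    recent = other_history[-8:]  # at most the 8 most recent moves
    return "C" if recent.count("C") >= 6 else "D"


your_past_moves = ["C", "C", "D", "C", "C", "C", "D", "C"]
print(six_of_eight([], your_past_moves))  # C (6 of the last 8 were cooperation)
```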

Occam’s Razor

Since we can’t know which of these infinitely many strategies accounts for your partner’s history, we will opt for the simplest set of rules that completely describes the history. This is based on a popular principle known as Occam’s Razor. The principle states that given two models, A and B, whose performance is exactly equal on all data, the simpler model is preferable.

This means the strategy that is based on fewer rules is the one we want to learn. But how do we analytically determine these strategies and what makes this problem so interesting?
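One rough way to picture "fewest rules" in code: take a handful of candidate strategies, keep the ones that reproduce every move in your partner's history, and pick the one with the lowest complexity score. This is only a toy sketch. The candidate list, the hand-assigned complexity numbers, and the function names are all assumptions for illustration, and it is not the method this series will end up using.

```python
# Each strategy takes (partner_history, your_history) and returns the partner's next move.
def always_cooperate(partner_history, your_history):
    return "C"


def always_defect(partner_history, your_history):
    return "D"


def tit_for_tat(partner_history, your_history):
    # Assumption: cooperate first, then copy your previous move.
    return your_history[-1] if your_history else "C"


# (name, strategy, hand-assigned complexity score; lower means simpler)
CANDIDATES = [
    ("cooperate rock", always_cooperate, 1),
    ("defect rock", always_defect, 1),
    ("tit for tat", tit_for_tat, 2),
]


def explains(strategy, your_moves, partner_moves):
    """True if the strategy reproduces every move the partner actually made."""
    return all(
        strategy(partner_moves[:t], your_moves[:t]) == partner_moves[t]
        for t in range(len(partner_moves))
    )


def simplest_explanation(your_moves, partner_moves):
    """Name of the lowest-complexity candidate consistent with the history, or None."""
    fitting = [
        (complexity, name)
        for name, strategy, complexity in CANDIDATES
        if explains(strategy, your_moves, partner_moves)
    ]
    return min(fitting)[1] if fitting else None


print(simplest_explanation(["C", "C", "C"], ["C", "C", "C"]))            # cooperate rock
print(simplest_explanation(["C", "D", "C", "C"], ["C", "C", "D", "C"]))  # tit for tat
```

In the first history both Cooperate Rock and Tit for Tat fit perfectly, and the lower complexity score is what breaks the tie.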

Next Steps

This was my first blog post of hopefully many, and I hope you enjoyed it! In the next blog post, I will formalize the problem and strategies mathematically and derive a continuous implementation, as well as lay the foundation for our ML model.
